To reduce the blurring in images taken in, for example, fog, we need to identify the properties of these hidden photons. One important property is that these photons are more likely to enter the camera travelling straight from the person in the photo. You might then think that by only looking at light that travels straight ahead, you could easily remove all of the scattered light. Unfortunately, it's not that easy. Scattered photons are not the only ones that arrive at an angle: so do the photons carrying information about the finer details of the person. This is why large objectives are needed to take high-resolution images. There is a trade-off between how much of the scattered light you can remove and the resolution of the image you take. If you let through only the straight photons to remove the scattered ones, the resolution of your image will be very poor.

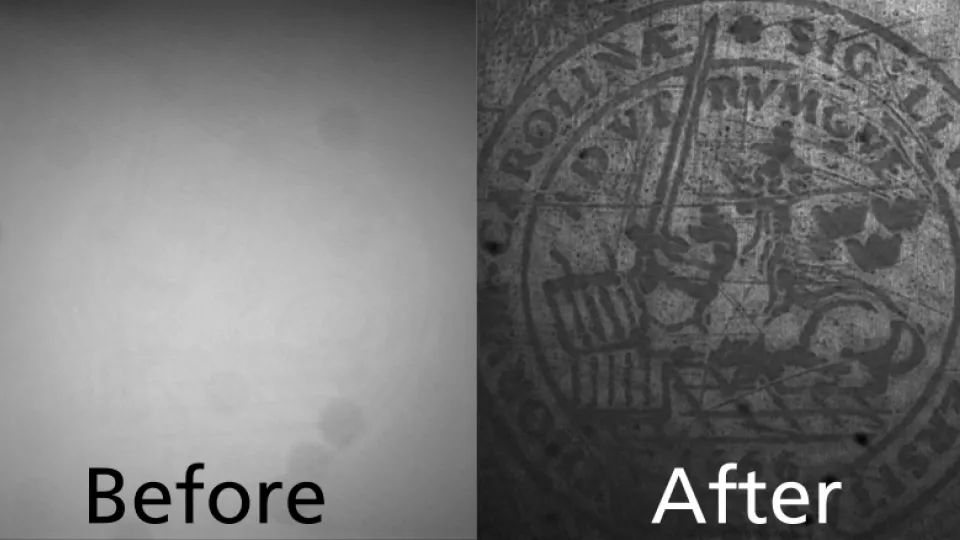

But what would happen if you took 100 low-resolution images at different angles and added them together? Each low-resolution image would contain different information about the scene, which could then be combined into a high-resolution image that maintains a high contrast. This might even make our pictures worthy of going online. The problem is that when you take many pictures one after another, you (and all the birds in the background) need to stay very still so that every picture shows the same scene. To avoid this, a technique can be used that divides the camera sensor into parts in order to take many pictures at the same time. The results shown in this work take several steps towards making this technique usable in different fields of physics, chemistry and biology.
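The idea of combining several low-resolution images taken at different angles can be illustrated with a toy one-dimensional sketch. It is a generic synthetic-aperture illustration, not the method used in this work, and it rests on simplifying assumptions: the "different angles" are taken to be tilts of the illumination (a tilt shifts the scene's spatial frequencies by a known integer amount), the camera is assumed to record the complex light field (real cameras record only intensity, so real methods need extra steps), and there is no fog, noise or scattering. The helper names `lowpass`, `capture` and `combine` are hypothetical.

```python
import numpy as np

N = 128  # number of samples across the 1D toy scene

def lowpass(spectrum, cutoff):
    """Small 'aperture': keep only spatial frequencies with |k| <= cutoff."""
    k = np.fft.fftfreq(spectrum.size, d=1.0 / spectrum.size)  # integer frequencies
    return np.where(np.abs(k) <= cutoff, spectrum, 0)

def capture(scene, tilt, cutoff):
    """Toy camera: illuminate at an angle (a linear phase ramp), then pass the
    light through a small aperture. Returns the complex field, which a real
    camera could not record directly (toy assumption)."""
    x = np.arange(scene.size)
    field = scene * np.exp(2j * np.pi * tilt * x / scene.size)
    return np.fft.ifft(lowpass(np.fft.fft(field), cutoff))

def combine(images, tilts, cutoff):
    """Undo each tilt and paste the frequency band it captured into one spectrum."""
    n = images[0].size
    x = np.arange(n)
    k = np.fft.fftfreq(n, d=1.0 / n)
    spectrum = np.zeros(n, dtype=complex)
    for image, tilt in zip(images, tilts):
        shifted_back = np.fft.fft(image * np.exp(-2j * np.pi * tilt * x / n))
        band = np.abs(k + tilt) <= cutoff  # frequencies this image actually saw
        spectrum[band] = shifted_back[band]
    return np.fft.ifft(spectrum)

# A scene with a coarse feature (frequency 3) and a fine detail (frequency 10).
x = np.arange(N)
scene = np.cos(2 * np.pi * 3 * x / N) + 0.5 * np.cos(2 * np.pi * 10 * x / N)

cutoff = 4          # the small aperture only passes |k| <= 4
tilts = [-8, 0, 8]  # three illumination angles
single = capture(scene, 0, cutoff)
images = [capture(scene, t, cutoff) for t in tilts]
combined = combine(images, tilts, cutoff)

print(np.abs(single - scene).max())    # about 0.5: the fine detail is missing
print(np.abs(combined - scene).max())  # close to zero: the fine detail is recovered
```

A single straight-on image behaves like the small-aperture case described earlier: the fine detail at frequency 10 is lost. Each tilted image captures a different band of frequencies, and together the three bands cover the whole scene.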

For those of you reading this while taking profile pictures in the fog: it seems we are not fully ready to help you yet. You can, however, simply wait for the fog to clear.

This work was done by Sam Taylor.

Supervised by:

Elias Kristensson - portal.research.lu.se